We are off to Adelaide for the MAGIC 2010 finals.

Looking forward to meeting the other teams, and hope it doesn't rain!



```
g++ createhull.cpp hull.cpp -o convex
```

```
/Applications/blender-2.49b-OSX-10.5-py2.5-intel/blender.app/Contents/MacOS
```

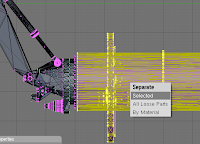

Once the plugin is installed, you can start creating convex hulls. To decompose an object into hulls manually, select it in Blender and cut it into smaller objects, either with the Knife tool (K) or by separating parts in Edit Mode with P. Selection tools such as B, and manually aligning the camera (N, Numpad 5), help a lot.

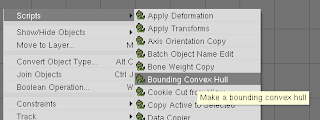

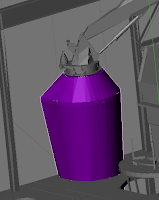


Once you have the objects you would like to generate hulls for, select one and run the "Bounding Convex Hull" script. It will ask you to confirm the creation of the hull. The new hull has the same name as the original object, with ".hull" appended.

(On LOCAL)

```
bzr branch WAMbot WAMbotAdriansBranch
```

In future, you can keep this branch up to date by running `bzr pull` from within the branch.

(On TARGET) From the bzr directory on the robot, run:

```
bzr push "e:/WAMbotAdriansBranch"
```

(On LOCAL)

```
bzr merge X:\WAMbotBranch
```

```
theta += omega * dt;
x += v * dt * cos(theta);
y += v * dt * sin(theta);
```
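A minimal, self-contained C++ sketch of this forward-Euler update, assuming a hypothetical `Pose` struct and `eulerStep` function (names chosen for illustration only). It keeps the same ordering as the snippet: the heading is updated first, and the new heading is used for the position step.

```cpp
#include <cmath>

// Hypothetical pose type for this sketch: position (x, y) and heading theta in radians.
struct Pose {
    double x;
    double y;
    double theta;
};

// One forward-Euler step of the motion model above.
// v: linear velocity, omega: angular velocity, dt: time step.
// As in the snippet, the heading is updated first and the new heading
// is then used for the position update.
Pose eulerStep(Pose p, double v, double omega, double dt) {
    p.theta += omega * dt;
    p.x += v * dt * std::cos(p.theta);
    p.y += v * dt * std::sin(p.theta);
    return p;
}

// Example use: integrate 2 s of motion at v = 1, omega = 0.5 in 100 steps.
//   Pose p{0.0, 0.0, 0.0};
//   for (int i = 0; i < 100; ++i) p = eulerStep(p, 1.0, 0.5, 0.02);
```

The error of this approximation shrinks with `dt`, which is why small time steps are used when integrating this way.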

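For constant linear velocity v and angular velocity w, the robot moves along a circular arc of radius v/w. The centre of that arc, (xc, yc), is:

```
xc = x - (v/w)*sin(theta);
yc = y + (v/w)*cos(theta);
```

Now we can update the position of the robot: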

```
theta += w * dt;
x = xc + (v/w)*sin(theta);
y = yc - (v/w)*cos(theta);
```

We can expand this to update the pose directly:

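```
x += -(v/w)*sin(theta) + (v/w)*sin(theta + w*dt);
y +=  (v/w)*cos(theta) - (v/w)*cos(theta + w*dt);
theta += w * dt;
```

This is the form many robotics papers use. Note that theta is updated last, because the x and y updates use the heading from the start of the step.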
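A C++ sketch of the exact arc update, assuming a hypothetical `Pose` struct, an `arcStep` function and an `eps` threshold (all illustrative, not from the original). Because v/w blows up as w approaches 0, the sketch falls back to a straight-line step when |w| is tiny; this is the limit of the formulas, since sin(theta + w*dt) - sin(theta) ≈ w*dt*cos(theta) for small w*dt.

```cpp
#include <cmath>

// Hypothetical pose type for this sketch: position (x, y) and heading theta in radians.
struct Pose {
    double x;
    double y;
    double theta;
};

// Exact pose update for constant v and w over dt: the robot follows a
// circular arc about the instantaneous centre of rotation.
// eps is an assumed threshold below which w is treated as zero.
Pose arcStep(Pose p, double v, double w, double dt, double eps = 1e-9) {
    if (std::fabs(w) < eps) {
        // Straight-line limit of the arc formulas as w -> 0.
        p.x += v * dt * std::cos(p.theta);
        p.y += v * dt * std::sin(p.theta);
        p.theta += w * dt;
        return p;
    }
    const double r = v / w;                    // signed turning radius
    const double newTheta = p.theta + w * dt;
    p.x += -r * std::sin(p.theta) + r * std::sin(newTheta);
    p.y +=  r * std::cos(p.theta) - r * std::cos(newTheta);
    p.theta = newTheta;                        // heading updated last
    return p;
}
```

For example, starting at the origin facing +x with v = 1 and w = 1, a step of dt = pi/2 traces a quarter circle about (0, 1) and ends at (1, 1) facing +y.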

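Going the other way, the motion between two poses (x, y, theta) and (x', y', theta') can be decomposed into an initial rotation, a translation and a final rotation:

```
deltaRot1  = atan2(y'-y, x'-x) - theta
deltaTrans = sqrt((x-x')^2 + (y-y')^2)
deltaRot2  = theta' - theta - deltaRot1
```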
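A C++ sketch of this rotate-translate-rotate decomposition together with its inverse, so the round trip can be checked. The `Pose`, `OdomDelta`, `decompose`, `compose` and `normalizeAngle` names are hypothetical, and wrapping the rotations into [-pi, pi] is an added choice that the formulas above do not specify.

```cpp
#include <cmath>

// Hypothetical pose type for this sketch: position (x, y) and heading theta in radians.
struct Pose {
    double x;
    double y;
    double theta;
};

// The three odometry components: rotate, translate, rotate.
struct OdomDelta {
    double rot1;
    double trans;
    double rot2;
};

// Wrap an angle into [-pi, pi] (an assumed convention for this sketch).
double normalizeAngle(double a) {
    return std::atan2(std::sin(a), std::cos(a));
}

// Decompose the motion from pose a = (x, y, theta) to b = (x', y', theta').
// Caveat: for a pure rotation (trans == 0), atan2(0, 0) returns 0, so the
// split between rot1 and rot2 is arbitrary; only their sum is meaningful.
OdomDelta decompose(const Pose& a, const Pose& b) {
    OdomDelta d;
    d.rot1  = normalizeAngle(std::atan2(b.y - a.y, b.x - a.x) - a.theta);
    d.trans = std::hypot(b.x - a.x, b.y - a.y);
    d.rot2  = normalizeAngle(b.theta - a.theta - d.rot1);
    return d;
}

// Apply a decomposed motion to a pose (the inverse of decompose).
Pose compose(const Pose& a, const OdomDelta& d) {
    Pose b;
    b.x = a.x + d.trans * std::cos(a.theta + d.rot1);
    b.y = a.y + d.trans * std::sin(a.theta + d.rot1);
    b.theta = normalizeAngle(a.theta + d.rot1 + d.rot2);
    return b;
}
```

For instance, moving from (0, 0, 0) to (1, 1, pi/2) gives rot1 = pi/4, trans = sqrt(2) and rot2 = pi/4.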

```
sudo apt-get install gcc
sudo apt-get install build-essential
sudo apt-get install g++
sudo apt-get install rpm
```

```
Missing critical pre-requisite
-- missing system commands
The following required for installation commands are missing:
libstdc++.so.5 ( library)
```

```
Missing optional pre-requisite
-- No compatible Java* Runtime Environment (JRE) found
-- operating system type is not supported.
-- system glibc or kernel version not supported or not detectable
-- binutils version not supported or not detectable
```

You only need the JRE for the visual debugger; otherwise you can safely continue.

```
real    0m16.855s
user    0m16.849s
sys     0m0.004s
```

And with ICC 11.1 at -O2:

```
real    0m11.369s
user    0m11.361s
sys     0m0.008s
```
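Taking the `real` (wall-clock) times from the two runs above (the compiler for the first run is not named in this excerpt), the ICC 11.1 -O2 build comes out roughly 1.5 times faster on this workload:

$$
\text{speedup} = \frac{16.855\,\text{s}}{11.369\,\text{s}} \approx 1.48
$$

Put another way, 11.369 / 16.855 ≈ 0.67, so the ICC build needs about two thirds of the time. This is a single timing of each build, so small differences should not be over-interpreted.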